Hot Aisle/Cold Aisle remains a common consideration for data center architects, engineers, and end users. Conceived by Robert Sullivan of the Uptime Institute, hot aisle/cold aisle is an accepted best practice for cabinet layout within a data center. The design relies on air conditioners, fans, and a raised floor as its cooling infrastructure and focuses on separating the cold inlet air from the hot exhaust air.

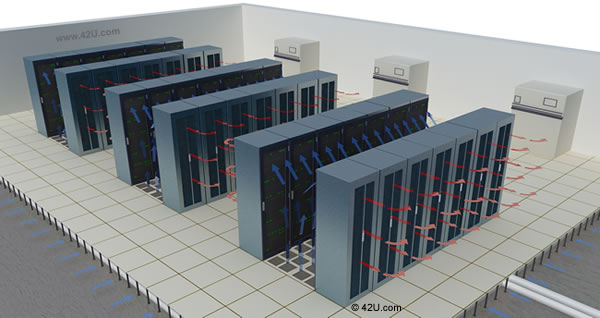

In this scheme, the cabinets are joined into a series of rows resting on a raised floor. Because most IT equipment draws cool air in at the front and exhausts heated air at the rear, alternate rows are turned so that the fronts of the racks face each other, forming cold aisles, while the rears face each other, forming hot aisles. Computer Room Air Conditioners (CRACs) or Computer Room Air Handlers (CRAHs), positioned around the perimeter of the room or at the ends of the hot aisles, push cold air under the raised floor and up into the cold aisles. Perforated floor tiles are placed only in the cold aisles, concentrating cool air at the fronts of the racks so that enough of it reaches the server intakes. (Naturally, all servers should be mounted with their intakes facing the front of the rack and their exhausts facing the rear.) As the air passes through the servers, it is heated and exhausted into the hot aisle, from which it is routed back to the air handlers.
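To make the alternating orientation concrete, here is a minimal sketch that models each row only by the direction its cabinet fronts (intakes) point and labels the aisle between neighboring rows. The `Row` class and the `build_rows` and `classify_aisles` functions are hypothetical illustrations, not part of any real planning tool, and the model deliberately ignores CRAC/CRAH placement, row ends, and tile layout.

```python
from dataclasses import dataclass


@dataclass
class Row:
    index: int
    faces: str  # direction the cabinet fronts (air intakes) point: "north" or "south"


def build_rows(n_rows: int) -> list[Row]:
    """Alternate orientation so adjacent rows go front-to-front, then back-to-back."""
    return [Row(i, "south" if i % 2 == 0 else "north") for i in range(n_rows)]


def classify_aisles(rows: list[Row]) -> list[str]:
    """Label the aisle between each pair of adjacent rows, listed north to south."""
    aisles = []
    for upper, lower in zip(rows, rows[1:]):
        if upper.faces == "south" and lower.faces == "north":
            aisles.append("cold")   # intakes face each other: perforated tiles go here
        elif upper.faces == "north" and lower.faces == "south":
            aisles.append("hot")    # exhausts face each other: air returns to the CRACs/CRAHs
        else:
            aisles.append("mixed")  # one row exhausts directly into the next row's intakes
    return aisles


print(classify_aisles(build_rows(4)))
```

With four alternating rows the aisles come out cold, hot, cold. If every row faced the same way, each aisle would be classified as mixed, since one row would exhaust straight into the next row's intakes, which is exactly what the layout is meant to prevent.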

The heat removal capacity of the design is influenced by raised floor height, tile placement and perforation, air handler locations, and room architecture. Sound, integrated designs are necessary, as all of these parts must work in tandem to maintain the data center’s environmental settings.

Hot Aisle/Cold Aisle Server Rack Configuration

Early server enclosures, often fitted with “smoked” or glass front doors, became obsolete with the adoption of hot aisle/cold aisle, because the approach requires perforated doors. For this reason, perforated doors remain the standard on most off-the-shelf server enclosures, though there is often debate about how much perforated area is needed for effective cooling. Server manufacturers like HP use 65% perforation on their server cabinets, while other cabinet manufacturers tout doors with in excess of 80% perforation.

While doors matter, the rest of the enclosure also plays a key role in maintaining airflow. Rack accessories must not impede air ingress or egress. Blanking panels and side “air dams” (baffle plates) are particularly important, because they prevent exhaust air from recirculating to the equipment intakes. Blanking panels install in unused rackmount space, while air dams install vertically outside the front EIA rails. These extra pieces must coexist with the cable management scheme and any supplemental rack accessories the user deems necessary.
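Deciding where blanking panels go comes down to finding the empty runs of rack units. The sketch below assumes U positions are numbered from 1 at the bottom of the rack and that each installed device is described by its bottom U and its height in U; the function name `unused_ranges` is hypothetical, and the sketch does not model air dams or cable managers.

```python
def unused_ranges(rack_units: int, occupied: list[tuple[int, int]]) -> list[tuple[int, int]]:
    """Return (first_u, last_u) runs of empty rack space that need blanking panels.

    occupied: (bottom_u, height_u) for each installed device; U positions start at 1.
    """
    used = [False] * (rack_units + 1)  # index 0 is unused so list indices match U numbers
    for bottom, height in occupied:
        for u in range(bottom, bottom + height):
            if not 1 <= u <= rack_units:
                raise ValueError(f"device at U{bottom} (height {height}) does not fit the rack")
            if used[u]:
                raise ValueError(f"U{u} is occupied twice")
            used[u] = True

    gaps: list[tuple[int, int]] = []
    start = None
    for u in range(1, rack_units + 1):
        if not used[u] and start is None:
            start = u                      # an empty run begins
        elif used[u] and start is not None:
            gaps.append((start, u - 1))    # the empty run ends just below this device
            start = None
    if start is not None:
        gaps.append((start, rack_units))   # empty run extends to the top of the rack
    return gaps


gaps = unused_ranges(42, [(1, 2), (5, 1), (10, 4)])
print(gaps, sum(last - first + 1 for first, last in gaps), "U of blanking panels")
```

For a 42U rack holding a 2U device at U1, a 1U device at U5, and a 4U device at U10, the function returns `[(3, 4), (6, 9), (14, 42)]`, i.e. 35U that should be closed off with blanking panels.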

The planning doesn’t stop at the accessory level. Hot aisle/cold aisle forces data center staff to be especially careful with spacing: each aisle must be sized for effective cooling and heat removal. To maintain that spacing, end users must establish a consistent cabinet footprint, paying particular attention to enclosure depth. Legacy server enclosures were often shallow, ranging from 32″ to 36″ deep. As both the equipment and the requirements grew, so did the server enclosure. A 42″ depth has become common in today’s data centers, and many manufacturers also offer 48″ deep versions. Besides accommodating deeper servers, the extra depth leaves room for the previously mentioned cable management products and rack accessories, as well as rack PDUs.
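Cabinet depth counts twice in the repeating hot aisle/cold aisle pattern, because each repeat unit holds one cold aisle, one hot aisle, and two rows of cabinets. The sketch below computes that row pitch for the enclosure depths mentioned above. The 48″ cold aisle (two 24″ floor tiles) and 36″ hot aisle are assumed example widths, not recommendations from the source, and the constant and function names are hypothetical.

```python
COLD_AISLE_IN = 48.0  # assumed: two 24-inch floor tiles wide
HOT_AISLE_IN = 36.0   # assumed example width


def row_pitch_in(cabinet_depth_in: float, cold_aisle_in: float, hot_aisle_in: float) -> float:
    """Length of one repeat unit (cold aisle + row + hot aisle + row), in inches."""
    return 2 * cabinet_depth_in + cold_aisle_in + hot_aisle_in


for depth in (32, 36, 42, 48):  # legacy and current enclosure depths from the text
    pitch = row_pitch_in(depth, COLD_AISLE_IN, HOT_AISLE_IN)
    print(f'{depth}" cabinets: pitch {pitch:.0f}" ({pitch / 12:.1f} ft)')
```

Under these assumed aisle widths, moving from 36″ to 48″ cabinets grows the pitch from 156″ (13 ft) to 180″ (15 ft), so every 6″ of added cabinet depth costs a foot of floor space perpendicular to the rows.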

Though hot aisle/cold aisle is deployed in data centers around the world, the design is not foolproof. A 2002 Uptime Institute white paper on the design regards heat loads of 50 watts/sq ft as significant, a far cry from the 1,500 watts/sq ft touted by SuperNAP in August 2008. Medium- to high-density installations have proven difficult for this layout because it often lacks precise air delivery. Even with provisions at the enclosure (blanking panels, air dams), bypass air is common, as is hot air recirculation. Hot spots develop, and the common response is to throw more cold air at the servers, which requires excess energy at the fan and chiller levels.

Furthermore, in higher-density installations, forcing more than 2,000 CFM (cubic feet per minute) through a perforated tile may be difficult, inefficient, and altogether impractical.
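The airflow problem can be estimated with the standard sensible-heat relation for air, BTU/hr = 1.08 × CFM × ΔT (°F), combined with 1 W = 3.412 BTU/hr, which gives CFM ≈ 3.16 × W / ΔT. The sketch below applies it to the two heat densities quoted earlier. The 20 °F intake-to-exhaust temperature rise and the 25 sq ft of gross floor area per cabinet are assumptions chosen only for illustration; real values depend on the equipment and the room layout, and `required_cfm` is a hypothetical helper name.

```python
BTU_PER_HR_PER_WATT = 3.412
# BTU/hr per (CFM x degF) for standard air: 0.075 lb/ft^3 * 0.24 BTU/(lb*degF) * 60 min/hr
SENSIBLE_HEAT_FACTOR = 1.08

SQFT_PER_CABINET = 25.0  # assumed gross floor area per cabinet, aisles included
DELTA_T_F = 20.0         # assumed intake-to-exhaust temperature rise, degF


def required_cfm(heat_load_w: float, delta_t_f: float) -> float:
    """Airflow needed to carry heat_load_w away with a delta_t_f (degF) temperature rise."""
    if delta_t_f <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_load_w * BTU_PER_HR_PER_WATT / (SENSIBLE_HEAT_FACTOR * delta_t_f)


for density in (50, 1500):  # W/sq ft figures quoted in the text
    load_w = density * SQFT_PER_CABINET
    cfm = required_cfm(load_w, DELTA_T_F)
    print(f"{density} W/sq ft -> {load_w / 1000:.2f} kW per cabinet -> {cfm:,.0f} CFM")
```

Under these assumptions, the 50 W/sq ft load works out to roughly 1.25 kW and about 200 CFM per cabinet, while 1,500 W/sq ft implies about 37.5 kW and nearly 6,000 CFM. At a 20 °F rise, any cabinet drawing more than about 12.7 kW already needs more than 2,000 CFM on its own.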

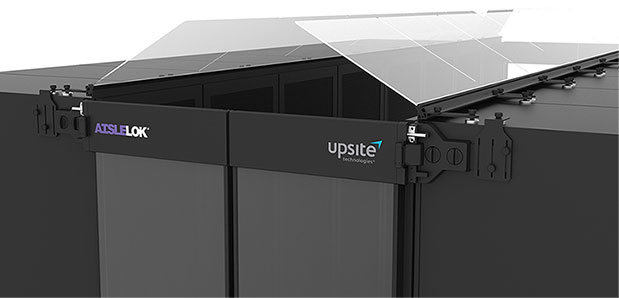

Despite this limitation of hot aisle/cold aisle, its premise of separation is widely accepted. Some cabinet manufacturers are taking this premise further, making the goal of complete air separation (or containment, if you will) a reality for the data center space.